From April 12 to 14, the language services industry gathered in Berlin for the rebranded WorldReady conference, organized by the Globalization and Localization Association (GALA).

The stated intention was to showcase how individuals and organizations are getting ready for this new, heavily AI-influenced reality.

The program was of high quality and the format well-rehearsed. Our team members, Michalina Krogulecka and Gabriel Karandyšovský, contributed to the proceedings, too (we’ll unpack what they presented in more detail below).

A few things stuck with us after the show. Here’s the short and long of it, in order.

TL;DR

We are collectively getting smarter.

On the one hand, it’s a question of reps — people and organizations have been putting in the work with AI, experimenting and implementing. The limitations of AI are well established. Teams are making AI work all the same.

Frameworks are emerging for how localization teams can successfully lead AI implementation within their organizations. Anti-playbooks are being written, categorizing lessons learned for teams to know what’s coming and what they will need to work on when the CEO inevitably asks, “Have you used AI?”

On the other hand, AI is forcing clarity of vision and demands that companies answer familiar questions: What do we do? How do we do it? Why do we do it?

We may be smarter now than in 2022, but these questions are buried under years (even decades) of organizational sediment and often lack clear-cut answers. That forces the language services industry to reckon with a different reality: What if the way forward is to be more intentional in our judgment and choices, including our application of technology?

Intentionality was the keyword that permeated the proceedings at WorldReady Berlin, though few stated it out loud.

2026: the year of the pragmatists

We have now firmly moved from hype (though it remains strong in some pockets of the industry) to pragmatism — 2026 belongs to people and teams that are building solutions with or around AI.

That said, many teams are still dealing with the leftovers of the hype: Yes, buyer-side localization departments have a clear mandate to use AI. But they often operate in environments without clear rules or proper AI governance. Every department uses AI differently. Many use it to create and translate content outside of sanctioned processes. Used this way, AI is producing inefficiencies and waste. Much of this is down to every company simply being at a different stage of its maturity and figuring it out on the fly.

The challenge for localization teams is to mitigate the risks of AI use — and, in doing so, prove they deserve a seat at the table.

Judging by the stories shared in Berlin, they’ve been largely up for the task.

Some of the show highlights included:

- Elitza Dublewa-Servatius of Coca-Cola Europacific Partners shared how her company pivoted to a centralized, AI-assisted platform to address fragmented internal demand for translation. The secret ingredient has been meeting internal users where they work, with the technology integrating seamlessly into each department’s workspace. The result? Less waste, more consistent and timely translation, and increased internal user satisfaction.

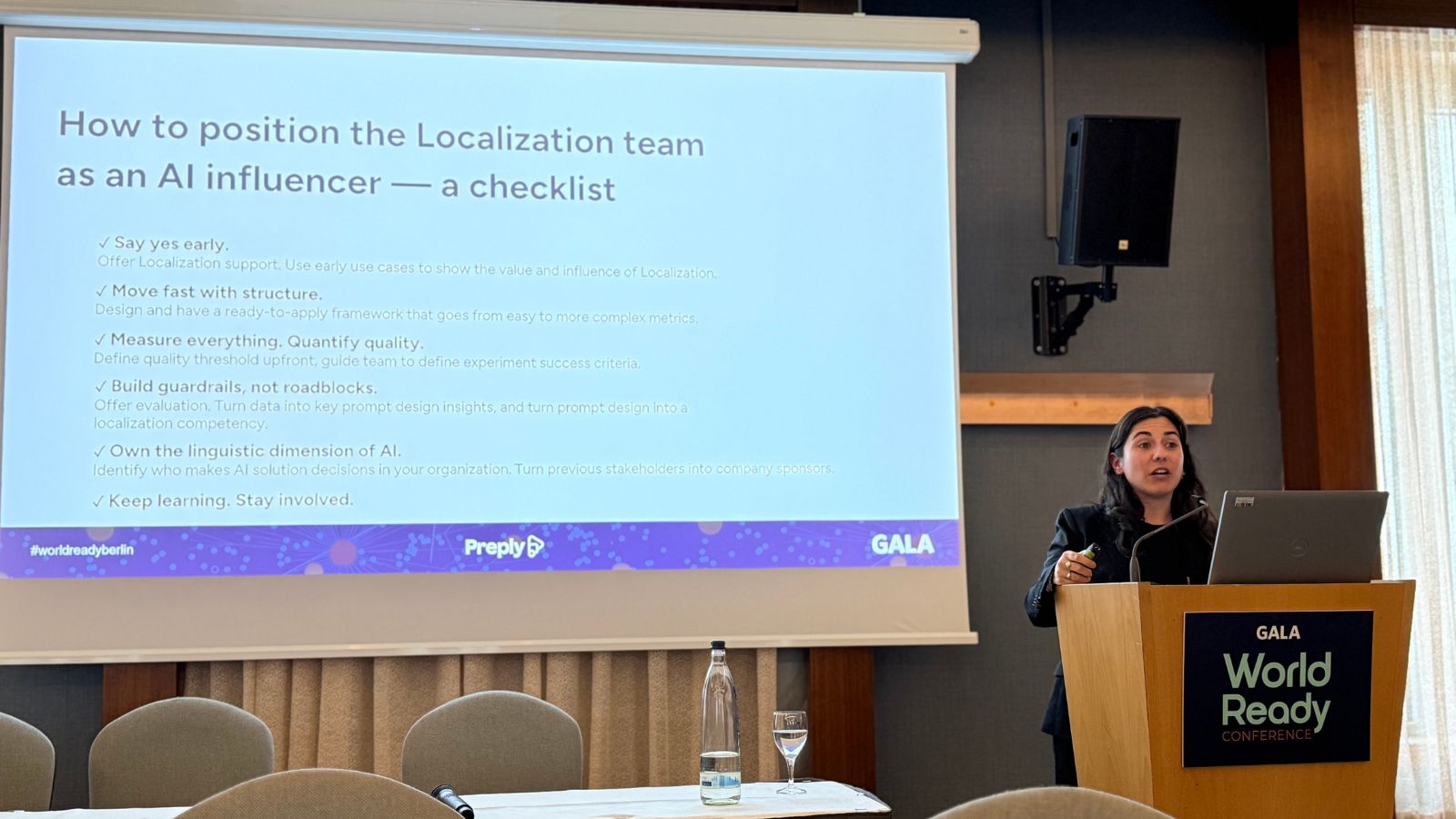

- Marta Nieto Cayuela and Verena Siegel of Preply showcased how their team deliberately repositioned localization as an internal influencer, helping to co-steer decision-making on AI. Their story speaks to the reality of many localization teams that are having a tough time getting a seat at the table. As companies have their A-ha! moment with AI and realize its output is not foolproof, the lessons localization teams have learned building QA systems can be used to build guardrails and create more responsible AI.

- Sam Gibbs from Shure and Vera Malysheva from TeamViewer spoke about the realities of the C-level handing down the order to investigate AI: The C-level’s awareness of AI and attitudes towards it condition how successful the company will be in its implementation. Sam’s and Vera’s testimonies converged on one point: The less prescriptive the C-level is, the more room the localization teams have to step up and become the glue holding the organization’s different functions together. After all, the current crop of LLM-based technology is very much language-focused, and language is what our industry is expert at.

- Chandana Gobbi from Zoetis showcased a proof of concept built around an AI agent that analyzes source content, flags terminology that machine translation (MT) may not handle appropriately, and brings in subject matter experts (SMEs) upstream where needed. This has enabled the team to significantly reduce the SME review stage and speed up time-to-market. It may be just a proof of concept, but it shows that AI can also work in highly regulated environments, as long as it is deployed thoughtfully and within tightly orchestrated workflows.

An undercurrent of the various talks is the idea (albeit not necessarily novel) of client-side localization teams and technology progressively reaching a symbiotic state, i.e., humans learning to use the technology in ways that don’t disrupt the core mission of delivering content to global audiences. As teams and organizations mature, this is becoming more commonplace.

If this is the outcome, language technology companies are natural allies in their clients’ efforts to embed AI into workflows, for a couple of reasons. First, they have the technical know-how to help clients who are strapped for bandwidth and lack the necessary in-house knowledge. Second, language technology continues to mature, with providers developing AI-powered solutions to address traditional pain points such as fragmented sources of truth, a lack of context and metadata, and the processing of large volumes at the scale required by modern global enterprises. There is also more appetite for pointed, highly specific (or niche) solutions than for off-the-shelf tools.

What does intentional mean to you?

The varied talks and presentations circled a word that not many deliberately said out loud: intentionality.

Attempting to implement AI has forced companies to reassess what they’ve been doing and how. The proof is in the pudding — AI (the current LLM-powered version) does have a place in localization workflows. But all of these specific, unique, or niche applications and workarounds that teams develop just show how important it is to be intentional about how technology is used.

When guided by human experts and deployed thoughtfully, the technology allows for extraordinary results.

But there’s one more question teams should try to answer: What is the purpose of what they are trying to achieve? It can’t all be about the pursuit of efficiencies, can it?

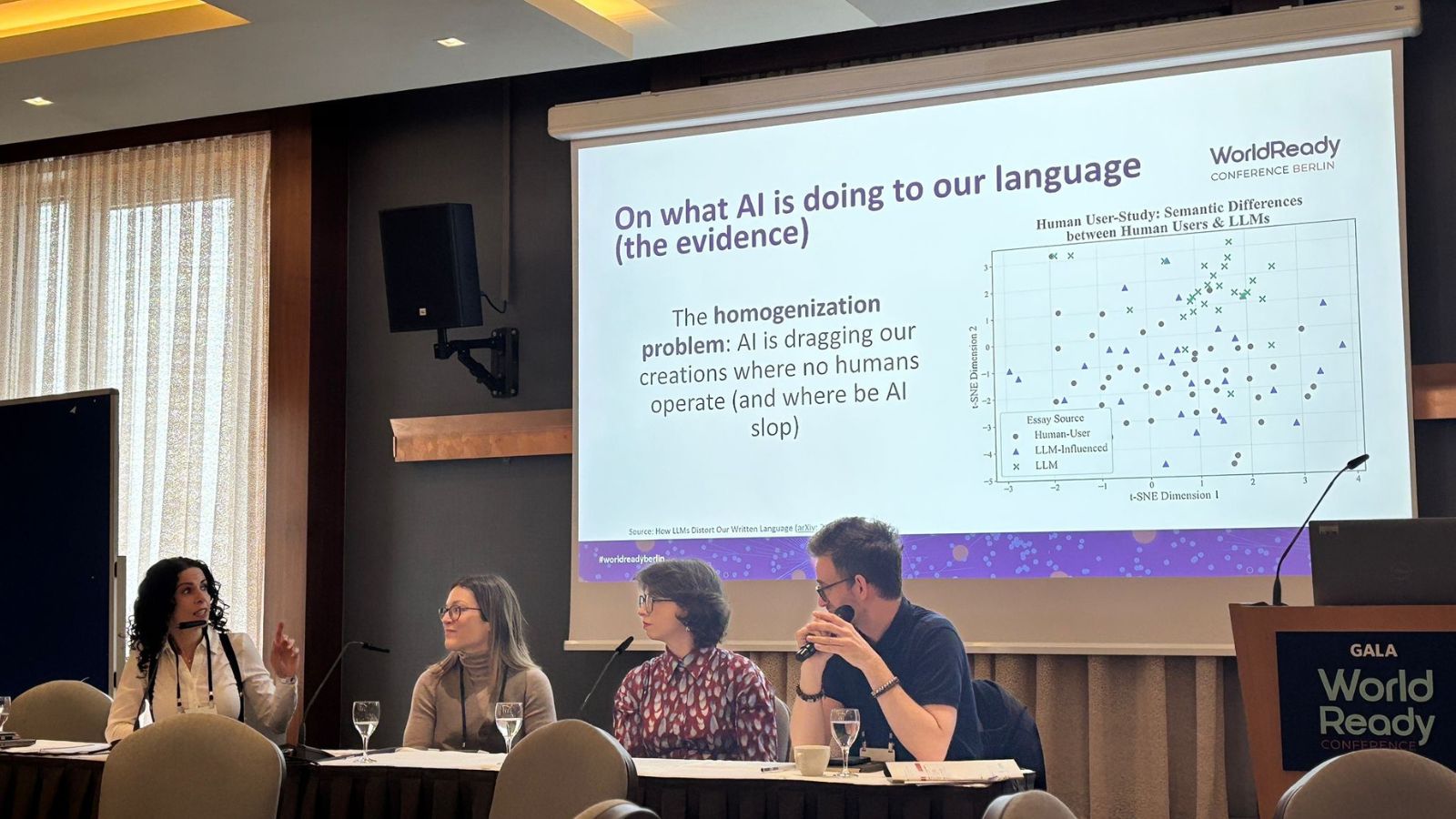

The panel moderated by Argos’ Gabriel Karandyšovský, with panelists Olga Stokowiec (Maxon), Marina Pantcheva (RWS), and Belén Agulló García (Terra), revealed another facet of reality, now backed by research: AI influences our language. Using AI to create content results in bland outputs that do not resemble anything a regular human would produce. Relying too heavily on AI risks homogenizing the language.

Now, if you consider the scale at which the world is flocking to LLM-powered tools, there is cause for concern:

- How do brands stand out in the sea of uniform, average, mass-produced AI-generated content? There is a real risk that AI flattens a brand’s unique voice.

- What other, mostly implicit, risks are they incurring? Frontier models are built on English and are demonstrably biased. Using them may perpetuate harm if proper guardrails are not in place and the expertise and craft of humans continue to be devalued.

Ultimately, each brand will need to reach its own conclusions on how to use AI thoughtfully. And it is already happening. Here’s to more companies leading with intent first!

Speaking of intent, Argos’ Michalina Krogulecka, together with Ines Albert (Büro Albert GbR), explored another facet of the AI-first reality: how AI is used to combat AI fraud in the context of recruitment processes. Their conclusion? No matter how much technology you use, human oversight is still indispensable. Human judgment is irreplaceable.

Read the April edition of our Global Ambitions newsletter, where we explore both Michalina’s and Gabriel’s sessions in further detail.

Scaling intentionality today and tomorrow

On balance, WorldReady Berlin, like the broader industry discourse, is slowly moving from questions of fit to questions of governance and of building and using AI responsibly.

WorldReady brought a new round of testimonies on how companies are making AI work. AI can be piloted and implemented (though it’s certainly not an instantaneous process and requires substantial resources).

As AI deployments scale across more markets and languages, questions of intent and meaning become harder to avoid.

They require answers before you hit the Generate/Publish button.
